The education world has undergone a greater disruption as a result of the Covid-19 pandemic than at any other time in most of our lives.

When the outbreak struck, exam boards, including Cambridge International, swiftly developed alternative systems for awarding grades in the June 2020 series.

Our aim was to enable students to get on with the rest of their lives, without having their education held up by the pandemic.

At the same time, as an exam board, Cambridge International has a duty to ensure that our grades are fair. Fair to past, current and future students, but also fair to universities that accept students with a Cambridge education. We had to make sure there was a level playing field.

Our system for awarding grades in June 2020 is a collaborative one that relies on both teacher judgement and statistical standardisation to make evidence-based decisions about grades for each candidate in each subject.

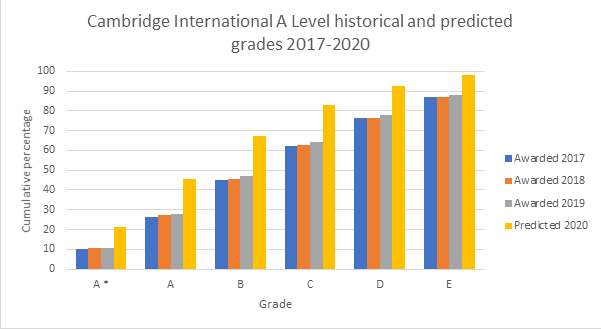

Comparing predicted grades with past results

We asked teachers worldwide to predict the grades their students would have achieved had they been able to sit the exams as usual. Then we checked the distribution of teacher predicted grades against the historical distribution of exam grades.

The question is: were the predicted grades in line with the performance of past cohorts?

Our analysis shows that predicted grades as a whole were higher than historical performance, by about half a grade on average.

Within that global average there was, of course, variation between schools – some of which predicted grades in line with historical performance and some of which were out by more than half a grade.

This is perfectly understandable, as teachers rightly want their students to succeed. They see what candidates can achieve in the classroom, but it can be difficult to know the extent to which a student could repeat that performance on another occasion. In these circumstances, it is natural that teachers will often take a positive view.

Let’s take one example of where a teacher may overpredict. Imagine that a teacher is submitting their predicted grades for A Level maths. When it comes to a particular student, the teacher is trying to decide whether the student would have achieved a grade A or A* in the exam. The student has performed consistently well in class work, homework and mock exams, generally scoring enough marks to achieve a strong grade A and occasionally an A*. The teacher knows that the student works hard and decides to give the benefit of the doubt and predict an A*.

Of course, exams don’t give the benefit of the doubt in that way. An exam sorts students dispassionately either side of a line.

There are many other examples, but the point is this – when thousands of teachers in thousands of schools make similar decisions across hundreds of syllabuses, it starts to have a cumulative effect.

Cumulative effect

We can quantify the difference between these teacher judgements and exam judgements by looking at statistics from the last three years. Here’s a graph showing predicted A Level grades for June 2020 – in yellow – compared with how students actually performed in exams from the previous three years.

In June 2017, 2018 and 2019, an average of about 10% of the grades awarded at A Level were A*. If we look at the predicted grades for the June 2020 series (the yellow bar), we can see that using predicted grades alone would result in about 20% of all A Level grades for this series being A*. The pattern continues across the other grades. While we could expect some difference between the June 2020 cohort and previous cohorts, we would not expect a difference of this magnitude.

Why statistical standardisation?

If exam grades for the June 2020 series were decided on predicted grades alone, a noticeable proportion of students would achieve higher grades than in previous and future years. The risk of unfairness and undermining trust in the grades would be high.

That is why we have adopted a collaborative system that makes use of teachers’ professional judgement, but which also includes a statistical standardisation process.

The rank order created by teachers is crucial to the system. For each syllabus in each school, Cambridge International can assess how many students should achieve a particular grade. What we don’t know is who should receive those grades. For that, we use the rank order created by the teachers at the school.
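To illustrate how a rank order and a set of grade counts can combine, here is a minimal Python sketch. It assumes the statistical standardisation has already produced the number of students who should receive each grade for one syllabus at one school; how those counts are calculated is not described here, so they are simply taken as input. The function name `allocate_grades` and the student names are hypothetical, and this is not Cambridge International's actual implementation.

```python
from typing import Dict, Sequence, Tuple


def allocate_grades(
    rank_order: Sequence[str],
    grade_counts: Sequence[Tuple[str, int]],
) -> Dict[str, str]:
    """Hypothetical sketch: hand out grades by walking down a teacher's rank order.

    rank_order: student identifiers, strongest first (as ranked by the school).
    grade_counts: (grade, number of students) pairs, best grade first. These
        counts are ASSUMED to come from a separate standardisation step.
    """
    if sum(count for _, count in grade_counts) != len(rank_order):
        raise ValueError("grade counts must cover every ranked student exactly once")

    awarded: Dict[str, str] = {}
    position = 0
    for grade, count in grade_counts:
        # The next `count` students in the rank order receive this grade.
        for student in rank_order[position:position + count]:
            awarded[student] = grade
        position += count
    return awarded


# Made-up example: five ranked students, one A*, two As and two Bs.
ranking = ["Ana", "Ben", "Chen", "Dev", "Eli"]
counts = [("A*", 1), ("A", 2), ("B", 2)]
print(allocate_grades(ranking, counts))
# {'Ana': 'A*', 'Ben': 'A', 'Chen': 'A', 'Dev': 'B', 'Eli': 'B'}
```

The design mirrors the division of labour described above: the counts answer "how many students get each grade", while the teacher's ranking alone decides "who".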

The combination of teacher judgement and statistical standardisation will ensure that the grades we award in June 2020 are comparable to those we have awarded in past years, and are therefore fair to candidates from the past as well as to candidates in this series.

Using all of this data, we estimate that just over half of the grades we award will match the predicted grades. Of the rest, some will be higher, but most will be lower. The vast majority of the grades we award will be within one grade of the predicted grade.
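The comparison above (same, higher, lower, within one grade) can be expressed as a short calculation. The Python sketch below assumes a simple A* to U grade scale in which adjacent grades are one step apart. The function name `compare_with_predictions` and the sample grades are hypothetical and purely illustrative. They are not Cambridge International data.

```python
from collections import Counter
from typing import Dict, Sequence

# ASSUMED grade scale, best grade first; index distance = number of grades apart.
GRADE_SCALE = ["A*", "A", "B", "C", "D", "E", "U"]


def compare_with_predictions(
    predicted: Sequence[str], awarded: Sequence[str]
) -> Dict[str, float]:
    """Hypothetical sketch: share of awarded grades that match, beat or fall
    short of the predicted grade, and share within one grade of it."""
    if len(predicted) != len(awarded) or not predicted:
        raise ValueError("need two non-empty grade lists of equal length")

    position = {grade: i for i, grade in enumerate(GRADE_SCALE)}
    tally: Counter = Counter()
    for pred, award in zip(predicted, awarded):
        # A lower index is a better grade, so a positive gap means the
        # awarded grade is higher than the predicted one.
        gap = position[pred] - position[award]
        if gap == 0:
            tally["same"] += 1
        elif gap > 0:
            tally["higher"] += 1
        else:
            tally["lower"] += 1
        if abs(gap) <= 1:
            tally["within_one"] += 1

    n = len(predicted)
    return {key: tally[key] / n for key in ("same", "higher", "lower", "within_one")}


# Made-up example with four candidates.
print(compare_with_predictions(["A*", "A", "B", "B"], ["A", "A", "B", "C"]))
# {'same': 0.5, 'higher': 0.0, 'lower': 0.5, 'within_one': 1.0}
```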

While a substantial number of students will receive one or more grades that are not their predicted grades, most will receive results that are broadly in line with teacher expectations, reflecting both the hard work of the students, and the professionalism and integrity of their schools.

Continuing educational journeys

We know many students would have preferred to take exams in the normal way this year, and many teachers, too. Since that option was taken off the table, we’ve been constantly astounded by how everyone in education has come together to meet the countless challenges imposed on us all by the pandemic.

Our aim in developing the system for awarding grades in this series was to allow as many students as possible to continue with their educational journeys, and do it in the fairest way possible. Thanks to the heroic efforts and expertise of teachers worldwide, and of colleagues here in Cambridge International, those journeys can start happening and students can get on with their lives.